We’ve all been there. An update is rolling out, all pre-flight checks are green, and yet everything falls apart after the update is released. Anyone in this position then inevitably asks the common question: “How can we avoid this?” Enter K8s Deployment Strategies. Check out the FlexDeploy integration if you already have a handle on Deployment Strategies.

What are Kubernetes Deployment Strategies?

Deployment Strategies (at least in the context we are going to talk about) revolve around Docker and Kubernetes (K8s), so it helps to be comfortable with both before reading on.

Deployment Strategies focus on changing or upgrading applications without downtime or significantly impacting the user. By and large, this is accomplished by routing traffic to different Pods or versions within a K8s Deployment.

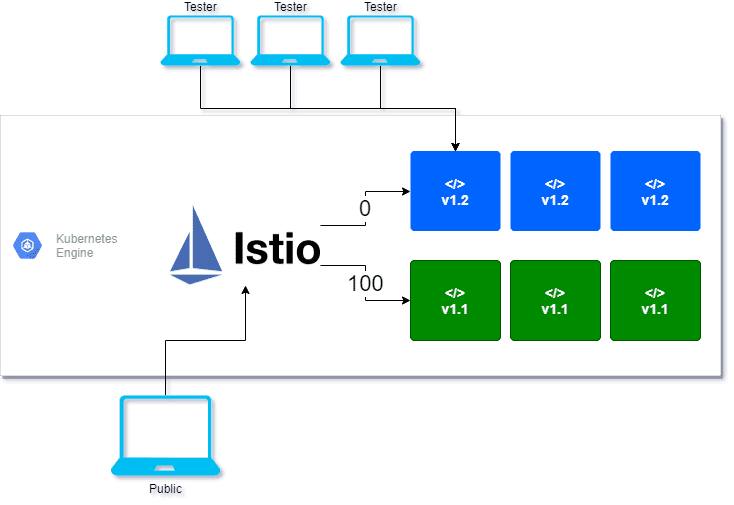

In order to have multiple versions deployed, we make use of Pod labels in K8s. For example, the current live production deployment has a label Green, and the newly deployed code has a label Blue. By default, the Green label receives all traffic, whilst Blue is only available through certain back doors. A testing group then utilizes these back doors to confirm the Blue pods pass validation. At this point, the Green Pods retire, and Blue becomes the new Green.

Above is one example of a Deployment Strategy called Blue/Green. We are going to take a deeper dive into Blue/Green as well as two more deployment strategies: Canary and A/B Testing.
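To make the label idea concrete, here is a minimal sketch of how the two versions might be declared side by side. The label key `fd_version` is the one used by the Istio manifests in this post; the Deployment names, the `app` and `release` labels, and the image references are hypothetical placeholders.

```yaml
# Sketch only: names, images, and the app/release labels are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: superapp-v1                 # hypothetical: current production version
spec:
  replicas: 2
  selector:
    matchLabels:
      app: superapp
      release: v1
  template:
    metadata:
      labels:
        app: superapp
        release: v1
        fd_version: green           # live Pods, receive public traffic
    spec:
      containers:
      - name: superapp
        image: example.com/superapp:1.0   # placeholder image
        ports:
        - containerPort: 8080
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: superapp-v2                 # hypothetical: newly deployed code
spec:
  replicas: 2
  selector:
    matchLabels:
      app: superapp
      release: v2
  template:
    metadata:
      labels:
        app: superapp
        release: v2
        fd_version: blue            # new Pods, reachable only by testers
    spec:
      containers:
      - name: superapp
        image: example.com/superapp:2.0   # placeholder image
        ports:
        - containerPort: 8080
```

Keeping `fd_version` out of the `selector` is a deliberate choice in this sketch: a Deployment's selector cannot be changed after creation, whereas Pod template labels can, which leaves room to relabel Blue as Green later.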

How do they work?

The short answer: Istio and YAML files. Istio is what is known as a Service Mesh, which, for the sake of this blog post, you can think of as a load balancer for the application Pods. The Service Mesh layer routes requests to Blue or Green Pods based on the configured YAML files.

By using FlexDeploy, organizations establish an automated and repeatable process for building, packaging, and safely deploying code, APIs, meta-data changes, and data migrations from development through test to production environments.

Blue/Green

A Blue/Green deployment consists of two environments: Blue and Green. Only one environment, the one hosting your current application, receives public traffic. The other environment is not available to the public and contains the new application version being tested. At some point these environments are swapped, pushing all public traffic to the new version (think light switch).

For instance, let’s say we are using a Blue/Green deployment strategy with the Istio manifest shown below.

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: superapp
    spec:
      selector:
        istio: ingressgateway
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: superapp
    spec:
      host: superapp
      subsets:
      - name: green
        labels:
          fd_version: green
      - name: blue
        labels:
          fd_version: blue
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: superapp
    spec:
      gateways:
      - superapp
      hosts:
      - superapp
      http:
      - match:
        - uri:
            prefix: /version
        route:
        - destination:
            port:
              number: 8080
            host: superapp
            subset: green
          weight: 100
        - destination:
            port:
              number: 8080
            host: superapp
            subset: blue
          weight: 0

Among other things, we are creating two subsets of our application here (Blue and Green). However, the key takeaway is the route block at the end of the VirtualService (lines 43-55 of the manifest), where we see the Green subset receiving 100% of traffic and Blue getting 0%. Remember, Istio always routes to Green for Blue/Green. The onus is on us to convert the Blue Pods to Green when testing is completed.
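As a rough illustration of that conversion step, the fragment below is a patch that flips the label on the new version's Pod template. It assumes the Blue Pods belong to their own Deployment and that `fd_version` is not part of that Deployment's `selector` (selectors are immutable); both are assumptions for this sketch, not something the Istio manifest dictates.

```yaml
# Hypothetical patch for the Deployment that currently runs the Blue Pods.
# Applying it (for example with `kubectl patch`) changes the Pod template,
# so Kubernetes rolls out replacement Pods that carry the green label.
spec:
  template:
    metadata:
      labels:
        fd_version: green   # was: blue
```

Once the replacement Pods are ready, they fall into the `green` subset of the DestinationRule, and the previous Green Pods can be scaled down.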

Blue/Green vs. Canary Deployment

Canary operates similarly to Blue/Green, but instead of directing all public traffic to one environment, traffic is split between the two environments by percentage. As the new version proves stable, a larger and larger percentage of the traffic can slowly be routed to it (think dimmer switch).

How about a more complicated example like Canary?

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: superapp
    spec:
      selector:
        istio: ingressgateway
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: superapp
    spec:
      host: superapp
      subsets:
      - name: green
        labels:
          fd_version: green
      - name: blue
        labels:
          fd_version: blue
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: superapp
    spec:
      gateways:
      - superapp
      hosts:
      - superapp
      http:
      - match:
        - uri:
            prefix: /version
        route:
        - destination:
            port:
              number: 8080
            host: superapp
            subset: green
          weight: 70
        - destination:
            port:
              number: 8080
            host: superapp
            subset: blue
          weight: 30

The primary difference is that the Green Pod receives 70% of traffic, and Blue gets 30%. In addition, unlike Blue/Green, these weights are then updated as we become more comfortable with the Blue Pods. For example, a deployment with Canary could last weeks, looking like this:

| Week | Green Traffic | Blue Traffic |
|------|---------------|--------------|
| 1    | 80%           | 20%          |
| 2    | 50%           | 50%          |
| 3    | 0%            | 100%         |
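Each step in that schedule is just a change to the two weights. As a sketch, the week 2 step could be applied by updating the VirtualService as follows; everything except the two weight values is copied from the Canary manifest above, and the 50/50 split is simply the week 2 row of the schedule.

```yaml
# Week 2 of the example schedule: traffic split evenly.
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: superapp
spec:
  gateways:
  - superapp
  hosts:
  - superapp
  http:
  - match:
    - uri:
        prefix: /version
    route:
    - destination:
        port:
          number: 8080
        host: superapp
        subset: green
      weight: 50          # was 80 in week 1
    - destination:
        port:
          number: 8080
        host: superapp
        subset: blue
      weight: 50          # was 20 in week 1
```

As in the other manifests in this post, the two weights add up to 100. Rolling back is the same edit in reverse: lower the Blue weight and raise the Green weight.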

A/B Testing

A/B Testing still consists of two environments, but where it differentiates itself is how traffic is routed. Routes are determined by match rules, which could be based on anything from specific HTTP headers to the region the request came from. This could allow everyone coming from a certain IP range to access the new application version whilst the general public still accesses the stable release.

How about A/B Testing?

    apiVersion: networking.istio.io/v1alpha3
    kind: Gateway
    metadata:
      name: superapp
    spec:
      selector:
        istio: ingressgateway
      servers:
      - port:
          number: 80
          name: http
          protocol: HTTP
        hosts:
        - "*"
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: superapp
    spec:
      host: superapp
      subsets:
      - name: green
        labels:
          fd_version: green
      - name: blue
        labels:
          fd_version: blue
    ---
    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: superapp
    spec:
      gateways:
      - superapp
      hosts:
      - superapp
      http:
      - match:
        - headers:
            end-user:
              exact: blue-user
        route:
        - destination:
            port:
              number: 8080
            host: superapp
            subset: blue
      - route:
        - destination:
            port:
              number: 8080
            host: superapp
            subset: green

Notice the absence of the weights we used for Canary and Blue/Green. Instead, a match rule routes requests with the HTTP header ‘end-user’ set to ‘blue-user’ to the Blue Pods. As a result, the Blue Pods are isolated to a specific group for testing. In contrast, all other traffic still goes through the Green Pods.

Now that the theory is out of the way, check out how Kubernetes integrates within FlexDeploy.
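Match rules can also be combined. The sketch below extends the `http` section so that a request reaches the Blue Pods if it carries the ‘end-user’ header from the manifest above or a region header. The `x-region` header and its value `eu-west` are hypothetical; they assume some upstream proxy stamps each request with the region it came from.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: superapp
spec:
  gateways:
  - superapp
  hosts:
  - superapp
  http:
  - match:
    # Entries in the match list are OR-ed: either one is enough.
    - headers:
        end-user:
          exact: blue-user
    - headers:
        x-region:            # hypothetical header set by an upstream proxy
          exact: eu-west
    route:
    - destination:
        port:
          number: 8080
        host: superapp
        subset: blue
  # No match block: this rule catches all remaining traffic.
  - route:
    - destination:
        port:
          number: 8080
        host: superapp
        subset: green
```

Conditions placed inside a single match entry are AND-ed instead, so listing both headers under one entry would limit the Blue Pods to users who satisfy both. Rule order matters too: the catch-all Green route has to stay last, because the first matching rule wins.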